The Importance of Random Learning

Ahead of the upcoming release of V49, Volume presents several articles straight from our archives to stimulate a renewed discussion on the themes contained in the new issue.

In April 2010 Cloud Lab visited the Asada Synergistic Intelligence Project, a part of the Japanese Science and Technology Agency’s ERATO project. In an anonymous meeting room surrounded by cubicles we met with Hiroshi Ishiguro to talk about the future of robotics, space and communications. Ishiguro, an innovator in robotics, is most famous for his Geminoid project, a robotic twin he constructed to mimic his every gesture and twitch.

Dressed in a black uniform, Ishiguro gives a presentation as matter-of-fact as his surroundings: robots will be everywhere in the future and he wants to make sure the future of communications is as human-centric as possible. He splits his time between leading the Socio-Synergistic-Intelligence Group at Osaka University and his position as Fellow at the Advanced Telecommunications Research Institute; his research interests are tele-presence, non-linguistic communication, embodied intelligence and cognition.

Cloud Lab: If a robot has a human-like appearance, do people expect humanlike intelligence?

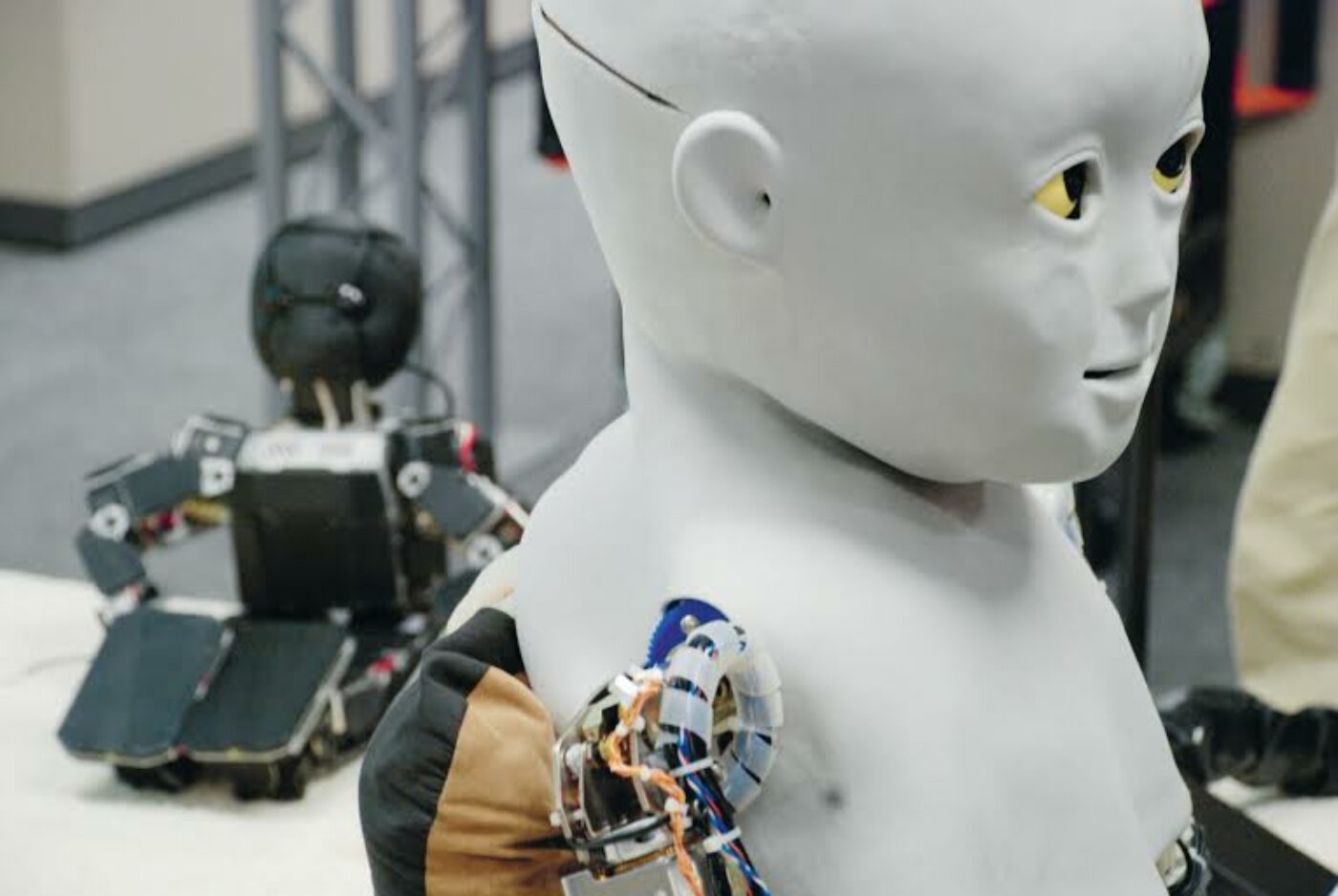

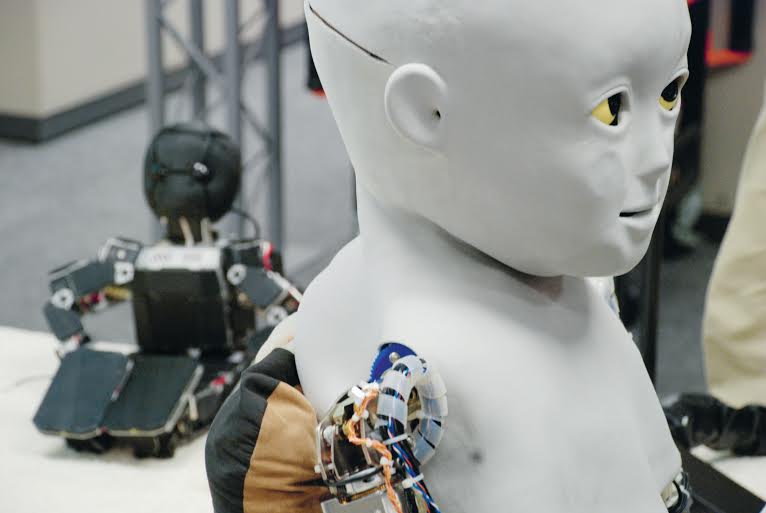

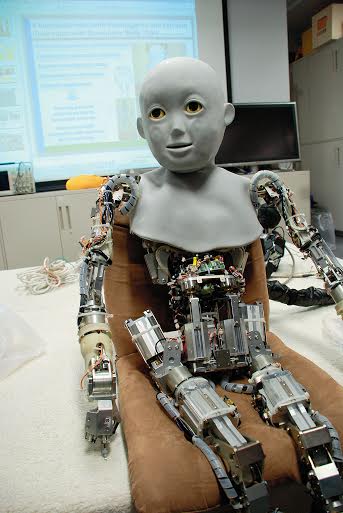

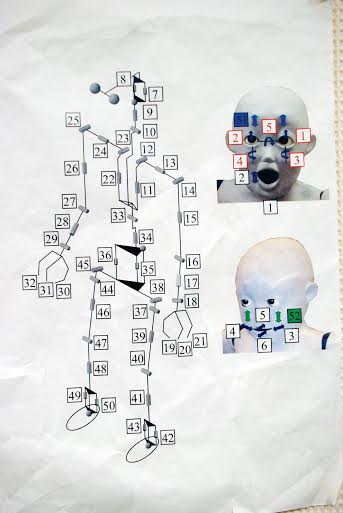

Hiroshi Ishiguro: If a robot has a human-like appearance, then yes, people expect humanlike intelligence. The robot is a hybrid system, a mix of controlled and autonomous motion. For instance, eye and shoulder movements are autonomic. We are always moving in a kind of unconscious movement. That kind of movement is automatic, and the conversation we’re having is dependent on these movements. At the same time, we can connect the voice to an operator on the internet, so we can have a natural conversation. I can recognize this android as my own body, and others recognize it as me. But others can adopt my body and learn to control it as well.

A robot is a very good tool for understanding humans but they’re not easy to make. Human-like robots can be so complicated we cannot use the traditional understanding of robotics. In order to realize a surrogate, or a more human-like robot, we need other tools. For example, the Honda Asimo uses a very simple motor, essentially a rotary motor – but it’s not human. The human is actually a series of linear actuators. With this kind of actuator we can make a more complex, more human robot … In a traditional process, we would train or develop each part and then put them together into an integrated robot. In this project we train the entire system at the same time. If the robot has a very complicated body it is difficult to properly control [and coordinate] all its movements. Therefore the robot needs help from a caregiver, a mother – in this case, my student. My student is teaching the robot how to stand up. This way we can understand which actuators are important for standing up.

There are very big differences in robotic and human systems. For instance, the human brain only needs 1 watt of energy while a supercomputer requires 50,000 watts. Why do we have this big difference? The reason is that the human brain makes good use of noise. I can try to explain how the biological system uses noise. At a molecular level everything is a gradient, but for the computer we are suppressing noise and expending energy – we are making the binary mistake. That system takes a lot of energy. In traditional engineering, the most important principle is how to suppress noise. The next system, or more intelligent or complex system, will figure out how to utilize noise, like a biological system.

We are working with biologists and we have developed this fundamental equation. We call this the Yuragi formula, which means biological fluctuation. A [kinematic skeleton] is the traditional system. But if we have a very complicated robot, if a robot moves in a dynamic environment, we can’t develop a model for that environment. If we watch a biological system, for instance, insects or humans, we see a model that can respond to a dynamic world and control a complicated body. We don’t know how many muscles we have, yet we learn to use our bodies very well. We are using noise [to learn and adapt], for instance, Brownian noise (though the biological system employs many different kinds of noise).

CL: So when the computer suppresses noise, it is expending a lot of energy.

HI: So we need to modify our models to incorporate noise. This creates a kind of balance-seeking, where noise and the model control together. If the model fails, then noise takes over. The robot doesn’t need to know how many legs or sensors it has. It needs to start with random movements, both small and large. We can apply these same ideas to a more complicated robot. We have developed a robot with the same bone structure and muscle arrangement as a human, yet with such a complicated robot we still cannot solve the inverse kinematics equations that determine movement. Instead we control it through random movements. The robot can estimate the distance between its hand and a target. If the distance is long, the robot will begin to randomly move its arm around in a large-scale noise pattern, across all actuators. Eventually it will find the target and the noise will be suppressed. It does this without ever knowing its own bone structure. We can relate this to the human baby. A baby has many random movements; it looks like a noise equation. Yet it develops a series of behaviors that allow it to control its own body. Babies run these noise-based automatic behaviors. Employing this we can build a more human-like surrogate.
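The reaching behavior described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the laboratory's formula or controller: the four-joint planar arm, the function names (`hand_position`, `distance_to`, `reach`), the `noise_gain` parameter and the rule of keeping a random move only when it brings the hand closer are all hypothetical choices made for the example. What it does share with the description is that the controller never sees the arm's structure, only a distance, and that the noise on every joint shrinks as the hand nears the target.

```python
import math
import random


def hand_position(angles, link_length=1.0):
    """Simulated world: where the hand of a planar arm ends up.

    The controller below never reads this model; it only sees a distance.
    """
    x = y = heading = 0.0
    for angle in angles:
        heading += angle
        x += link_length * math.cos(heading)
        y += link_length * math.sin(heading)
    return x, y


def distance_to(target, angles):
    """The only feedback the controller gets: hand-to-target distance."""
    x, y = hand_position(angles)
    return math.hypot(x - target[0], y - target[1])


def reach(target, n_joints=4, max_steps=20000, tolerance=0.05,
          noise_gain=0.1, seed=0):
    """Reach for a target with distance-scaled random motion on every joint.

    Returns (joint angles, final distance, steps used).
    """
    rng = random.Random(seed)
    angles = [0.0] * n_joints
    dist = distance_to(target, angles)
    for step in range(max_steps):
        if dist < tolerance:
            return angles, dist, step
        # Far from the target the noise is large; close to it, it fades out.
        sigma = noise_gain * dist
        candidate = [a + rng.gauss(0.0, sigma) for a in angles]
        candidate_dist = distance_to(target, candidate)
        # Assumption for this sketch: keep a random move only if it helps.
        if candidate_dist < dist:
            angles, dist = candidate, candidate_dist
    return angles, dist, max_steps


if __name__ == "__main__":
    angles, dist, steps = reach(target=(1.5, 2.0))
    print(f"stopped after {steps} steps, {dist:.3f} from the target")
```

Note that `reach` takes no link lengths and solves no inverse kinematics; changing `n_joints` changes the body without changing the control rule, which is the point made in the answer above.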

CL: As architects we are curious whether responsiveness – or the feeling of presence – is something that can be integrated into the architecture and space?

HI: [Robotics researchers] call it body propriety and it is quite important for everything. Appearance is also important. The human relationship is based on human appearance. Basically we want to see a beautiful woman, right? Appearance is very important for everything, that is why I have started the android project. Until now robot researchers only focused on how to move the robot and did not design its appearance. Every day you check your face, not your behavior. They are very different.

CL: Do you think the robot can be emotive, resembling the human? Can it be expressive without having the physical character of the human being? For example, Aibo (Sony) or Asimo (Honda)?

HI: Emotion, emoting, objective function, intelligence, or even consciousness is not the objective. I function, I’m subjective, [so] you believe I’m intelligent right? Where is the function of consciousness or emotion? We believe by watching your behavior that you have consciousness or emotion. She has emotions and believes I have emotions therefore we just believe that we have emotions and consciousness. Following from that, the robot can have emotion, because it can have eyes. Can you have drama in robotics? I worked on the robot drama I am the Worker by Oriza Hirata. We used the robots as actresses and actors in scenarios with human actors. The human actors don’t need to have a humanlike mind. The director’s orders are very precise, like ‘move forty centimeters in four seconds’. But actually we can feel human emotions in the heart when watching this drama. I think that is the proper understanding of emotion, consciousness and even the heart.

CL: In a sense, the body is the last frontier of innovation. Despite the context of many technologies (for instance, the rapid incorporation of cell phones for communications) extending the human body, the actual manipulation of the body itself remains taboo. There is a debate on the ethics of changing bodies. With whom do you identify in this debate: the engineer, philosopher, priest?

HI: My main interest is the human mind and why emotional phenomena appear in human society. Robots reflect and explore that human society. My next collaboration is with a philosopher, actually two post-docs from philosophy. I am trying to develop a model of social relationships. I believe we can model human dynamics – we cannot watch just one person in understanding emotion, right? We need to watch the whole society and develop models of that society – that is very important. Today we don’t have enough researchers approaching the human model in robotics, trying to establish the relationship between robots and human beings.

CL: In terms of working method, the laboratory is a very controlled space – but what feedback have you been getting in terms of robot deployment in spaces that sponsor good interactions, for instance, in malls, hospitals, large spaces, small spaces, etc.?

HI: The real fundamentals come from the field, in interactive robots. We are getting a lot of feedback. In order to have this kind of system, we need sensors. We can’t just use the sensors from the robots. It is not enough to [compute and plan] out the necessary activities. We have developed our own sensor networks with camera/laser-scanners and our system is pretty robust. The importance of the teleoperation is through field testing. People ask the robot difficult questions and that is natural. Before that I developed some autonomous robots – but I gave up that [research direction] and focused on the teleoperation – which is good for collecting data. Using teleoperation the robot can gather data on how people behave and react. Then we can gather the information and make a truly autonomous robot.

CL: Our behaviour is very different depending on the space. We operate differently from space to space. Is it not something designed into the robot?

HI: That is why we developed telecommunication. If we control the robot we control the situation. We can gather information and develop more autonomous robots. We are in a gradual development process for the developing robot… Evolutionary processes are important and should happen, but evolution is driven by humans. In my laboratory we are building a new robot; we are improving the robot. That is the evolution. Evolution is quite slow. The current evolution of humanity is done through technology. By creating new technologies we can evolve. We can evolve rapidly.

CL: Parallel to evolution, the child robot you were showing us had to be physically trained to move by a trainer.

HI: That is development, not evolution.

CL: But it is employing a certain kind of machine learning, so that as it is trained over time it can perform these functions by itself. Do you not see that as a kind of evolution?

HI: That is development. Evolution is different. For example, a robot would have to be designed through genes – that is evolution. But even for the developmental robot, you have to give a kind of gene, its program code.

CL: Have you experimented with genetic programming?

HI: We are using genetic programming but only in the context of very simple creatures, such as insects. Our main purpose is to have a more human-like robot and we cannot simulate the whole process of human evolution.

CL: What do you think are the limits of the Geminoids? Technology is always extending our capabilities, but is the Geminoid extending us or are we still extending it?

HI: People typically expect the Geminoid to be able to manipulate something. Actually, the Geminoid is just for communication. Physically it is weak. The actuators themselves are not powerful enough to manipulate much. The Geminoid is a surrogate, whereas the manipulation of objects can be accomplished with another mechanism.

CL: What is the limit case of the technology then? If you are no longer physically present in a space your robot can do anything. Is this kind of freedom a goal?

HI: The goal is ultimately to understand humans. On the other hand we can’t stop technological development in the near future. We have to seriously consider how we should use this technology as a society. My goal is still to really think through these issues of technology, which is still far behind the real understanding of the human body. Today we don’t see this kind of humanoid robot in a city, but we see many machines, for instance, the vending machines found in the Japanese rail system. The vending machine talks, says hello. It’s impossible to stop the advancement of this kind of technology; that is human history. The robots we develop always find their source in humanity. We walk, so locomotion technologies are important. We manipulate things with our hands, so manipulation is important. We are not replacing humans with machines, but we are learning about humans by making these machines.